Product Requirements Document (PRD)

Project Name: Peregrine

Feature/Version Name: [Name of the feature or version being described]

1. Introduction

This product requirements document outlines the high-level feature set for the entire Peregrine version of the Phenom app.

1.1 Purpose: To enumerate critical functionality for Phenom, a competitive, gamified app for capturing UAP, cryptid, and paranormal sightings.

1.2 Scope: The app will be distributed on Android and iOS (iPhone).

1.3 Goals: Monetized, gamified video capture that is undebunkable (meaning signed with C2PA content credentials) and annotated for further study.

1.4 General terms

Phenom - general term for a user-recorded event

Teams - users can form teams to record things together

2. User Stories (Issue References Only)

- Please provide links to existing User Story issues in Linear.

- [Add more User Story issue links]

3. Functional Requirements

App Frontend Features

3.1 Video Capture

- single button to record video

- Preserves C2PA metadata when the recording is collected.

- Pairs video and audio with metrics from any other attached sensors (see Sensor Usage).

- Displays other internal sensor data over the screen, as buzzard does.

- Camera Modes

  - Recording tips on/off

    - tell the user to stop shaking

    - tell the user to record some terrain

  - ID Augmented Reality Mode: an AR mode that overlays satellites, planes, and other known objects so the user can check what is in the sky.

  - Live Capture Mode: turns off the ID Augmented Reality view and starts recording with a ‘zen focus’ effect.

  - Test Capture: same as Capture, but without saving the data to Phenom.earth cloud storage. Provides features to browse test files, share them to The Phenom App, and delete them.

- segment known items, such as:

  - satellites

  - planes

  - ships

  - planets

  - meteors

- The AR overlay is available before and after recording and can be toggled.

Recording flow (flowchart): User presses Record → Vision Camera v5, which feeds both sensor data collection (GPS, gyro, compass) and the video stream → C2PA metadata embedding → post-recording edit screen → upload to cloud storage.
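
Pairing sensor metrics with video means matching sensor samples, which arrive at their own rates, to video frame timestamps. The sketch below is minimal and assumes both streams carry millisecond timestamps on a shared clock. Each frame is matched to its nearest sensor sample with a binary search. `SensorSample`, `nearestSampleIndex`, and `pairFramesWithSensors` are hypothetical names, not part of Vision Camera or any other library.

```typescript
// Hypothetical sensor sample shape; the real payload (GPS, gyro, compass) may differ.
interface SensorSample {
  timestampMs: number; // assumed to share a clock with the video frame timestamps
  headingDeg: number;
  pitchDeg: number;
}

// Index of the sample closest in time to `t`. `samples` must be non-empty and
// sorted by timestampMs. Ties go to the earlier sample.
function nearestSampleIndex(samples: SensorSample[], t: number): number {
  let lo = 0;
  let hi = samples.length - 1;
  // Binary search for the first sample at or after t (or the last sample).
  while (lo < hi) {
    const mid = (lo + hi) >> 1;
    if (samples[mid].timestampMs < t) lo = mid + 1;
    else hi = mid;
  }
  // The previous sample may be closer than the one found.
  if (lo > 0 && t - samples[lo - 1].timestampMs <= samples[lo].timestampMs - t) {
    return lo - 1;
  }
  return lo;
}

// Hypothetical helper: pairs every frame with its nearest sample, or null when
// the nearest sample is more than maxGapMs away (e.g. a sensor stalled).
function pairFramesWithSensors(
  frameTimestampsMs: number[],
  samples: SensorSample[],
  maxGapMs: number,
): Array<{ frameMs: number; sample: SensorSample | null }> {
  return frameTimestampsMs.map((frameMs) => {
    if (samples.length === 0) return { frameMs, sample: null };
    const s = samples[nearestSampleIndex(samples, frameMs)];
    return { frameMs, sample: Math.abs(s.timestampMs - frameMs) <= maxGapMs ? s : null };
  });
}
```

Nearest-sample matching keeps the raw readings intact. Interpolating between samples would give smoother overlays, but it needs care for compass headings that wrap at 360°.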

3.2 Post-recording editing screen

- allow clipping of the video, and similar edits, before upload to The Phenom App cloud storage

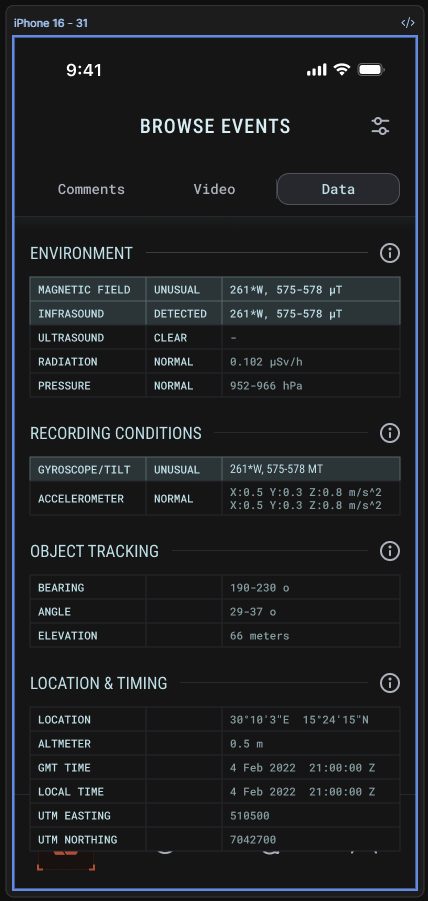
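
Clipping can be expressed as an FFmpeg invocation. The sketch below only assembles an argument list; it does not call FFmpeg Kit, and `buildTrimArgs` is a hypothetical helper. The flags themselves (`-ss`, `-i`, `-t`, `-c copy`) are standard FFmpeg options.

Two caveats apply:

- With stream copy, cut points snap to keyframes, so a frame-accurate clip would need re-encoding instead.
- Any edit changes the file, so handling the clip's C2PA credentials is a separate question this sketch does not address.

```typescript
// Hypothetical helper: builds FFmpeg arguments that cut [startSec, endSec)
// out of inputPath without re-encoding.
function buildTrimArgs(
  inputPath: string,
  outputPath: string,
  startSec: number,
  endSec: number,
  durationSec: number,
): string[] {
  if (!(startSec >= 0 && endSec > startSec && endSec <= durationSec)) {
    throw new RangeError(`invalid trim range ${startSec}-${endSec} for a ${durationSec}s video`);
  }
  return [
    "-ss", startSec.toFixed(3),            // input-side seek (fast, keyframe-based)
    "-i", inputPath,
    "-t", (endSec - startSec).toFixed(3),  // output duration
    "-c", "copy",                          // stream copy: no re-encode
    outputPath,
  ];
}
```

For example, `buildTrimArgs("in.mp4", "out.mp4", 2, 5.5, 10)` yields arguments that keep 3.5 seconds starting at the 2-second mark.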

3.3 Data display and interaction

- Summary screen of video (once recorded)

  - 3D globe or map view showing events, plus data layers such as airplane and satellite traffic present during the video

  - show stats such as bearing and location

  - video metrics

- Event viewing tool

  - normal playback screen

  - ability to overlay other known objects (see AR recording mode)

  - possible data sources

    - ADS-B (airplanes)

    - AIS (ships)

    - more?

  - could be a planetarium-style view

  - could be a premium feature

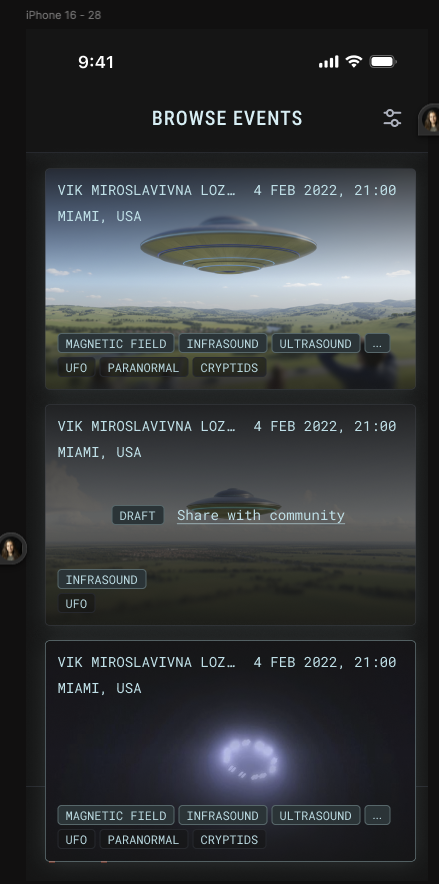
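
Bearing stats and object overlays both reduce to two steps:

- finding the direction from the observer to something, for example an aircraft position from an ADS-B feed;
- mapping that direction into the camera frame.

The sketch below is minimal. It assumes a level camera, a known compass heading, and a pinhole camera model on the horizontal axis only. `initialBearingDeg` and `screenXForAzimuth` are hypothetical names.

```typescript
const toRad = (deg: number): number => (deg * Math.PI) / 180;
const toDeg = (rad: number): number => (rad * 180) / Math.PI;

// Initial great-circle bearing from point 1 to point 2, in degrees [0, 360).
function initialBearingDeg(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const phi1 = toRad(lat1);
  const phi2 = toRad(lat2);
  const dLambda = toRad(lon2 - lon1);
  const y = Math.sin(dLambda) * Math.cos(phi2);
  const x = Math.cos(phi1) * Math.sin(phi2) - Math.sin(phi1) * Math.cos(phi2) * Math.cos(dLambda);
  return (toDeg(Math.atan2(y, x)) + 360) % 360;
}

// Hypothetical helper: horizontal pixel position of an object at azimuthDeg for a
// camera pointing at headingDeg with horizontal field of view hFovDeg.
// Returns null when the object is outside the frame.
function screenXForAzimuth(
  azimuthDeg: number,
  headingDeg: number,
  hFovDeg: number,
  screenWidthPx: number,
): number | null {
  // Signed angle from the camera axis, normalized to [-180, 180).
  const delta = ((azimuthDeg - headingDeg + 540) % 360) - 180;
  if (Math.abs(delta) > hFovDeg / 2) return null;
  const half = screenWidthPx / 2;
  // Pinhole projection: the offset grows with tan(angle), not linearly.
  return half + half * (Math.tan(toRad(delta)) / Math.tan(toRad(hFovDeg / 2)));
}
```

The vertical position would follow the same pattern, using the elevation angle, camera pitch, and vertical field of view.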

- The Phenom App Content Navigation Screen

  - 3D globe, as is currently done in Enigma or Google Earth

  - magnifying glass in the upper right

    - allows search by things like username, team, or tag

    - 2 major range sliders: distance and time

      - distance slider: show all events within X km/miles of the pin drop (defaults to the user's GPS location)

      - calendar icon to select a date, plus a time slider for a specified period preceding that date

- The Phenom App Data Visualization Features

  - Turn on Heatmaps

    - filter by tag

    - include 3 heatmap mode checkboxes: UAP/cryptid/paranormal

  - Turn on Event Altitude Stick: applicable when 2 users capture an overlapping event (see growth section)

  - Investigation Incident Command View: shows all resources aligned with a specific investigation event, such as Phenom App Team members and sensor packages for planned observation events.
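
The distance and time sliders, the category checkboxes, and the heatmap toggle describe a query over events followed by an aggregation. The sketch assumes each event carries a latitude, longitude, timestamp, and one of the three categories. The `PhenomEvent` type and the function names are hypothetical.

Distance uses the haversine great-circle formula. To keep the example self-contained, heatmap counts are binned on a simple latitude/longitude grid rather than on the H3 hexagons mentioned under Scalability.

```typescript
type Category = "uap" | "cryptid" | "paranormal";

// Hypothetical event record.
interface PhenomEvent {
  id: string;
  lat: number;
  lon: number;
  timeMs: number;
  category: Category;
}

const EARTH_RADIUS_KM = 6371; // mean Earth radius

// Great-circle distance via the haversine formula.
function haversineKm(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const rad = Math.PI / 180;
  const dLat = (lat2 - lat1) * rad;
  const dLon = (lon2 - lon1) * rad;
  const a =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(lat1 * rad) * Math.cos(lat2 * rad) * Math.sin(dLon / 2) ** 2;
  return 2 * EARTH_RADIUS_KM * Math.asin(Math.min(1, Math.sqrt(a)));
}

// Events within radiusKm of the pin, inside the window (endMs - windowMs, endMs],
// limited to the enabled categories.
function queryEvents(
  events: PhenomEvent[],
  pin: { lat: number; lon: number },
  radiusKm: number,
  endMs: number,
  windowMs: number,
  enabled: ReadonlySet<Category>,
): PhenomEvent[] {
  return events.filter(
    (e) =>
      enabled.has(e.category) &&
      e.timeMs <= endMs &&
      e.timeMs > endMs - windowMs &&
      haversineKm(pin.lat, pin.lon, e.lat, e.lon) <= radiusKm,
  );
}

// Heatmap counts keyed by square grid cell of cellDeg x cellDeg degrees
// (a stand-in for H3 cells in this sketch).
function heatmapCounts(events: PhenomEvent[], cellDeg: number): Map<string, number> {
  const counts = new Map<string, number>();
  for (const e of events) {
    const key = `${Math.floor(e.lat / cellDeg)}:${Math.floor(e.lon / cellDeg)}`;
    counts.set(key, (counts.get(key) ?? 0) + 1);
  }
  return counts;
}
```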

3.4 User interaction rewards

- users who record multiple views of the same event can earn rewards

- users who record many events can also earn rewards

- rankings
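
One way to turn these reward rules into rankings is to compute a score per user and assign standard competition ranks, where tied users share a rank.

- The point values below are placeholders, not decided values.
- The meaning of `multiViewEvents` (events captured from more than one viewpoint) is an assumed interpretation.
- `UserActivity`, `scoreUser`, and `rankUsers` are hypothetical names.

```typescript
// Hypothetical per-user activity summary.
interface UserActivity {
  userId: string;
  eventsRecorded: number;  // total events the user recorded
  multiViewEvents: number; // events captured from more than one viewpoint (assumed meaning)
}

// Placeholder weights; real values would be a product decision.
const POINTS_PER_EVENT = 10;
const BONUS_PER_MULTI_VIEW = 25;

function scoreUser(a: UserActivity): number {
  return a.eventsRecorded * POINTS_PER_EVENT + a.multiViewEvents * BONUS_PER_MULTI_VIEW;
}

// Standard competition ranking ("1224"): equal scores share a rank, and the
// next distinct score skips ahead by the number of tied users.
function rankUsers(users: UserActivity[]): Array<{ userId: string; score: number; rank: number }> {
  const scored = users
    .map((u) => ({ userId: u.userId, score: scoreUser(u) }))
    .sort((a, b) => b.score - a.score || a.userId.localeCompare(b.userId));
  let rank = 0;
  return scored.map((s, i) => {
    if (i === 0 || s.score !== scored[i - 1].score) rank = i + 1;
    return { ...s, rank };
  });
}
```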

3.5 Education

- onboarding screen and test area

  - Educational materials on how to film properly.

    - could be a quick walk-through tutorial that loads on app install

    - could involve recording a bird, for example

  - Test recording walk-through of the app. The video doesn't upload to the database.

  - Types of observed UAP (descriptors or tags of observed shapes)

  - Types of observed Cryptids

  - Types of paranormal observations

3.6 Phenom 15-Second Preview of Recent Activity

3.7 Equipment store and purchase

- Links to buy additional sensors and equipment

- links to buy T-shirts and swag

3.8 User profiles

- name

- address

- profession

- etc.

App Backend Features

3.10 Backend data sharing API

- Should be compatible with sharing data to other organizations (e.g., NASA)

- offer limited third-party integrations. Possible integration techniques:

  - webhook?

  - RSS?

- Other DBs to share with:

  - MUFON

  - NUFORC
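
If webhooks are chosen, a common pattern is for the sender to sign each payload with a shared secret. Receivers, such as a partner organization, can then verify that the payload came from Phenom and was not altered.

The sketch below is minimal and uses HMAC-SHA256 from Node's built-in `crypto` module, assuming a Node.js service sends the webhooks. The payload fields, the helper names, and how the signature is transported (for example in an HTTP header) are assumptions, not a defined Phenom API.

```typescript
import { createHmac, timingSafeEqual } from "node:crypto";

// Hypothetical payload for a newly published event.
interface EventWebhookPayload {
  eventId: string;
  category: "uap" | "cryptid" | "paranormal";
  occurredAt: string; // ISO 8601
  lat: number;
  lon: number;
}

// Sender side: serialize once and sign the exact bytes that will be sent.
function signPayload(payload: EventWebhookPayload, secret: string): { body: string; signature: string } {
  const body = JSON.stringify(payload);
  const signature = createHmac("sha256", secret).update(body).digest("hex");
  return { body, signature };
}

// Receiver side: recompute over the raw body and compare in constant time.
// The length check also guards timingSafeEqual, which throws on unequal lengths.
function verifySignature(body: string, signature: string, secret: string): boolean {
  const expected = Buffer.from(createHmac("sha256", secret).update(body).digest("hex"), "hex");
  const received = Buffer.from(signature, "hex");
  return received.length === expected.length && timingSafeEqual(received, expected);
}
```

Signing the serialized string rather than the object avoids mismatches caused by receivers re-serializing JSON with different key order or whitespace.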

3.11 General app backend

- Data is annotated and curated to suit further API-based analysis.

- Desktop website features

  - Reproduces all data visualizations available in the mobile app.

- Growth ideas

  - grouping videos into events automatically

    - how do we figure out if users are seeing the same thing?

    - how do we run detailed automated analysis on multiple videos (sensor fusion)?

  - trajectory extrapolation to notify people that something might be coming

    - could be triggered in high-volume event cases

    - we should think about ways to facilitate this
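
For the sensor-fusion question, and for the Event Altitude Stick (applicable when two users capture an overlapping event), one simple approach is to intersect the two lines of sight. The sketch makes these assumptions:

- Both observers' positions are already in a local east/north frame in meters.
- Both observers stand at the same ground level.
- Each recording supplies a compass azimuth and an elevation angle.

`Sighting`, `Fix`, and `triangulate` are hypothetical names. Real sensor noise would call for a least-squares or probabilistic fit rather than an exact intersection.

```typescript
// Hypothetical inputs: one line of sight per observer.
interface Sighting {
  east: number;         // observer position in meters, local frame (assumed)
  north: number;
  azimuthDeg: number;   // compass bearing of the line of sight, clockwise from north
  elevationDeg: number; // angle above the horizon
}

interface Fix {
  east: number;
  north: number;
  altitudeM: number;       // mean of the two per-observer height estimates
  altitudeSpreadM: number; // disagreement between them; large values suggest a bad match
}

const rad = (d: number): number => (d * Math.PI) / 180;

// Intersects the two horizontal lines of sight, then estimates height from each
// observer's elevation angle. Returns null for near-parallel or diverging rays.
function triangulate(a: Sighting, b: Sighting): Fix | null {
  const dax = Math.sin(rad(a.azimuthDeg));
  const day = Math.cos(rad(a.azimuthDeg));
  const dbx = Math.sin(rad(b.azimuthDeg));
  const dby = Math.cos(rad(b.azimuthDeg));
  // Solve a + t * dA = b + s * dB for t and s (Cramer's rule).
  const det = dbx * day - dax * dby;
  if (Math.abs(det) < 1e-6) return null; // lines of sight (nearly) parallel
  const dE = b.east - a.east;
  const dN = b.north - a.north;
  const t = (dbx * dN - dby * dE) / det; // ground distance from A
  const s = (dax * dN - day * dE) / det; // ground distance from B
  if (t <= 0 || s <= 0) return null;     // intersection behind an observer
  const hA = t * Math.tan(rad(a.elevationDeg));
  const hB = s * Math.tan(rad(b.elevationDeg));
  return {
    east: a.east + t * dax,
    north: a.north + t * day,
    altitudeM: (hA + hB) / 2,
    altitudeSpreadM: Math.abs(hA - hB),
  };
}
```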

4. Non-Functional Requirements

4.1 Performance:

- App must maintain 60fps during camera recording to ensure smooth capture of fast-moving phenomena.

- Map rendering must handle 1000+ H3 hexagons without frame drops or UI jank.

- Video processing (FFmpeg operations, C2PA signing) must run off the UI thread to keep the interface responsive.

- MMKV storage is used for fast local data access, replacing AsyncStorage for performance-critical paths.

4.2 Security:

- C2PA (Coalition for Content Provenance and Authenticity) content credentials are embedded in all captured media to establish authenticity and chain of custody.

- MMKV encrypted storage is used for sensitive local data (tokens, user preferences).

- Authentication is handled via OAuth 2.0 through AWS Cognito.

- In-app messaging uses the Matrix protocol, which supports end-to-end encryption and has a decentralized architecture.
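
Native mobile clients usually pair the OAuth 2.0 authorization-code flow with PKCE (RFC 7636), which Cognito supports. This section does not specify that flow, so the sketch below assumes it. The client generates a random code verifier and sends the S256 challenge, `BASE64URL(SHA256(verifier))`, with the authorization request.

The sketch uses Node's `crypto` module so it can run standalone. Inside the React Native app, a platform SHA-256 and secure random source would take its place, and an auth library may handle this step entirely. The helper names are hypothetical.

```typescript
import { createHash, randomBytes } from "node:crypto";

// Base64url without padding, as RFC 7636 requires.
function base64Url(buf: Buffer): string {
  return buf.toString("base64").replace(/\+/g, "-").replace(/\//g, "_").replace(/=+$/, "");
}

// 32 random bytes encode to a 43-character verifier (RFC 7636 allows 43 to 128).
function createCodeVerifier(): string {
  return base64Url(randomBytes(32));
}

// S256 code challenge derived from the verifier.
function codeChallengeS256(verifier: string): string {
  return base64Url(createHash("sha256").update(verifier, "ascii").digest());
}
```

The challenge goes out as `code_challenge` with `code_challenge_method=S256`. The verifier is sent later as `code_verifier` when exchanging the authorization code for tokens.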

4.3 Usability:

- The app supports 7 languages: English, Spanish, French, German, Japanese, Chinese, and Arabic (with full RTL layout support).

- Video capture uses a single-button interaction to minimize complexity during time-sensitive recording situations.

- The camera HUD displays a compass and live orientation data (pitch, roll, yaw) to assist with directional documentation.

- UI components follow platform accessibility guidelines (iOS Human Interface Guidelines, Android Material Design) for screen reader and contrast compliance.
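
Orientation sensors often report attitude as a unit quaternion, while the HUD shows pitch, roll, and yaw. The sketch assumes a quaternion `(w, x, y, z)` and the common aerospace Z-Y-X (yaw, pitch, roll) convention.

- Device frames differ between iOS and Android, so the axis mapping is an assumption to check against the actual sensor API.
- Yaw matches a compass heading only when the quaternion's reference frame is aligned to north.
- The sketch also formats a heading as a 16-point compass label.
- `toEulerDeg` and `compassPoint` are hypothetical names.

```typescript
interface Quaternion { w: number; x: number; y: number; z: number }
interface EulerDeg { yaw: number; pitch: number; roll: number }

const DEG = 180 / Math.PI;

// Z-Y-X (yaw-pitch-roll) Tait-Bryan angles, in degrees, from a unit quaternion.
function toEulerDeg({ w, x, y, z }: Quaternion): EulerDeg {
  const roll = Math.atan2(2 * (w * x + y * z), 1 - 2 * (x * x + y * y));
  // Clamp: rounding can push the value slightly past +/-1 near +/-90 degrees of pitch.
  const sinPitch = Math.max(-1, Math.min(1, 2 * (w * y - z * x)));
  const pitch = Math.asin(sinPitch);
  const yaw = Math.atan2(2 * (w * z + x * y), 1 - 2 * (y * y + z * z));
  return { yaw: yaw * DEG, pitch: pitch * DEG, roll: roll * DEG };
}

const POINTS = ["N", "NNE", "NE", "ENE", "E", "ESE", "SE", "SSE",
                "S", "SSW", "SW", "WSW", "W", "WNW", "NW", "NNW"];

// Maps any heading in degrees (including negative values) to the nearest of 16 compass points.
function compassPoint(headingDeg: number): string {
  const normalized = ((headingDeg % 360) + 360) % 360;
  return POINTS[Math.round(normalized / 22.5) % 16];
}
```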

4.4 Scalability:

- H3 hexagonal geospatial indexing is used for spatial queries, enabling efficient global-scale event lookups at multiple resolution levels.

- The API layer uses an adapter pattern, allowing the backend to be swapped or extended without rewriting the frontend.

- Hasura GraphQL with PostgreSQL provides a scalable relational data layer capable of handling concurrent submissions from a large user base.
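
The adapter pattern described above can be sketched as a small interface that screens depend on, with one implementation per backend. The `EventsApi` interface, its method names, and the in-memory adapter are hypothetical. A Hasura adapter would implement the same interface with GraphQL requests; its query shape is not specified here.

```typescript
// Hypothetical domain type shared by all adapters.
interface EventSummary {
  id: string;
  title: string;
  timeMs: number;
}

// The contract the frontend codes against; backends plug in behind it.
interface EventsApi {
  listRecent(limit: number): Promise<EventSummary[]>;
  getById(id: string): Promise<EventSummary | undefined>;
}

// In-memory adapter, useful for tests, demos, and offline development.
class InMemoryEventsApi implements EventsApi {
  private readonly events: EventSummary[];

  constructor(events: EventSummary[]) {
    this.events = events;
  }

  async listRecent(limit: number): Promise<EventSummary[]> {
    return [...this.events].sort((a, b) => b.timeMs - a.timeMs).slice(0, limit);
  }

  async getById(id: string): Promise<EventSummary | undefined> {
    return this.events.find((e) => e.id === id);
  }
}

// Screen-level logic depends only on the interface, so swapping the backend
// (for example, to a GraphQL adapter) leaves this function unchanged.
async function recentTitles(api: EventsApi, limit: number): Promise<string[]> {
  return (await api.listRecent(limit)).map((e) => e.title);
}
```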

7. UI/UX Design

Figma: Design assets and component libraries are maintained in the team Figma workspace. Reference Figma for approved layouts, color tokens, spacing, and component specifications before implementing any new screens.

Key UI/UX considerations:

- The camera HUD is rendered using Skia (via React Native Skia) to achieve high-performance, hardware-accelerated overlays without impacting the camera pipeline.

- The app uses a dark theme optimized for low-light and night sky observation scenarios, reducing eye strain and improving screen visibility in dark environments.

- Bottom tab navigation provides access to the five primary areas: Home, Map, Record, Sensors, and Chat.

- Design tokens (colors, typography, spacing) are kept in sync between the Figma source of truth and the codebase to prevent visual drift across releases.
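
The dark theme and the accessibility goals both depend on legible contrast. It can therefore help to check token pairs against the WCAG 2.x contrast ratio whenever tokens are synced from Figma. The luminance and contrast formulas below follow the WCAG definitions. The token names and hex values are illustrative samples, not confirmed design tokens.

```typescript
// Hypothetical dark-theme tokens; values are illustrative samples only.
const tokens = {
  background: "#121010",
  surface: "#1a1a1a",
  accent: "#d73429",
  info: "#a5e3e8",
  text: "#ffffff",
} as const;

// WCAG 2.x relative luminance of an sRGB color written as "#rrggbb".
function relativeLuminance(hex: string): number {
  const m = /^#([0-9a-f]{6})$/i.exec(hex);
  if (!m) throw new Error(`expected #rrggbb, got ${hex}`);
  const digits = m[1];
  const channel = (i: number): number => {
    const c = parseInt(digits.slice(i, i + 2), 16) / 255;
    return c <= 0.03928 ? c / 12.92 : ((c + 0.055) / 1.055) ** 2.4;
  };
  return 0.2126 * channel(0) + 0.7152 * channel(2) + 0.0722 * channel(4);
}

// Contrast ratio from 1 (identical colors) to 21 (black on white), order-independent.
function contrastRatio(a: string, b: string): number {
  const [hi, lo] = [relativeLuminance(a), relativeLuminance(b)].sort((x, y) => y - x);
  return (hi + 0.05) / (lo + 0.05);
}

// WCAG AA asks for at least 4.5:1 for normal text and 3:1 for large text.
const meetsAA = (fg: string, bg: string, largeText = false): boolean =>
  contrastRatio(fg, bg) >= (largeText ? 3 : 4.5);
```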

8. Technical Requirements

- The app should record battery level and voltage as part of its internal logging.

- React Native 0.81.5 with Expo SDK 54.0.31 is the application framework.

- TypeScript is required throughout the codebase for type safety and maintainability.

- EAS (Expo Application Services) is used for managed builds and over-the-air update delivery.

- Vision Camera v5 provides frame-level camera control required for the recording HUD and AR overlays.

- FFmpeg Kit handles all video processing operations (trimming, re-encoding, C2PA injection) off the main thread.

- Mapbox GL powers the 3D globe and 2D map views with support for custom data layers.

- Matrix JS SDK is integrated for secure, encrypted in-app team messaging.
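
The battery logging requirement implies a small, bounded log, so that long recording sessions do not grow memory without limit. The sketch below is a minimal fixed-capacity ring buffer.

- It assumes samples are pushed by a platform battery reader that this document does not specify.
- `BatterySample` and `RingLog` are hypothetical names.
- Voltage is optional because a given platform may not expose it.

```typescript
// Hypothetical sample shape.
interface BatterySample {
  timestampMs: number;
  level: number;      // 0..1 charge fraction
  voltageMv?: number; // only where the platform exposes it (assumption)
}

// Fixed-capacity log that overwrites the oldest entry when full.
class RingLog<T> {
  private readonly buf: Array<T | undefined>;
  private readonly capacity: number;
  private next = 0;  // slot the next push writes to
  private count = 0; // number of valid entries

  constructor(capacity: number) {
    if (!Number.isInteger(capacity) || capacity <= 0) {
      throw new RangeError("capacity must be a positive integer");
    }
    this.capacity = capacity;
    this.buf = new Array<T | undefined>(capacity).fill(undefined);
  }

  push(item: T): void {
    this.buf[this.next] = item;
    this.next = (this.next + 1) % this.capacity;
    this.count = Math.min(this.count + 1, this.capacity);
  }

  // Entries from oldest to newest.
  toArray(): T[] {
    const start = (this.next - this.count + this.capacity) % this.capacity;
    const out: T[] = [];
    for (let i = 0; i < this.count; i++) {
      out.push(this.buf[(start + i) % this.capacity] as T);
    }
    return out;
  }
}
```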

9. Release Criteria

- All critical user flows are functional end-to-end: record a phenom, view event details, use team chat, and browse the map.

- C2PA metadata is successfully embedded and verifiable in all captured media before upload.

- Sensor data (compass, accelerometer, GPS) is correctly paired and stored alongside video recordings.

- App crash rate does not exceed 1% as measured in production telemetry.

- TestFlight (iOS) and Google Play internal testing (Android) beta rounds are complete with no blocking issues outstanding.

- Performance benchmarks are met: sustained 60fps during recording and app cold-launch time under 3 seconds on reference devices.
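
The 60fps criterion gives a per-frame budget of 1000 / 60 ≈ 16.7 ms. One way to turn "sustained 60fps" into a pass/fail measurement is to take recorded frame timestamps and count the intervals that overrun the budget.

- The tolerance value and the dropped-frame estimate are assumptions for illustration, not values the release criteria define.
- `analyzeFramePacing` is a hypothetical name.

```typescript
interface FramePacingReport {
  averageFps: number;
  droppedFrames: number;   // estimated frames missed across all late intervals
  worstIntervalMs: number;
}

// Analyzes increasing frame timestamps (ms) against a target frame rate.
// An interval counts as late when it exceeds the budget by more than toleranceMs;
// an interval spanning k budgets is counted as k - 1 dropped frames (at least 1).
function analyzeFramePacing(timestampsMs: number[], targetFps = 60, toleranceMs = 2): FramePacingReport {
  if (timestampsMs.length < 2) throw new Error("need at least two frame timestamps");
  const budgetMs = 1000 / targetFps;
  let dropped = 0;
  let worst = 0;
  for (let i = 1; i < timestampsMs.length; i++) {
    const interval = timestampsMs[i] - timestampsMs[i - 1];
    worst = Math.max(worst, interval);
    if (interval > budgetMs + toleranceMs) {
      dropped += Math.max(1, Math.round(interval / budgetMs) - 1);
    }
  }
  const spanMs = timestampsMs[timestampsMs.length - 1] - timestampsMs[0];
  return {
    averageFps: ((timestampsMs.length - 1) * 1000) / spanMs,
    droppedFrames: dropped,
    worstIntervalMs: worst,
  };
}
```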

10. Open Issues/Questions

- AR overlay data source licensing: usage rights for ADS-B (aircraft), AIS (maritime), and satellite position feeds need legal review before public launch.

- Monetization model: the pricing and gating strategy for premium features (heatmaps, event altitude stick, advanced visualization) has not been finalized.

- Data sharing agreements with MUFON and NUFORC are pending; the format and frequency of data exports need to be negotiated.

- Event auto-grouping algorithm design is unresolved — the approach for determining whether multiple user recordings capture the same phenomenon (sensor fusion, location overlap, timestamp correlation) requires further technical design work.
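
As a starting point for the auto-grouping question, recordings could be grouped when they are close in both time and location. "Close" is treated as transitive: if A matches B and B matches C, all three form one candidate event.

The sketch below uses union-find over all pairs, with a haversine distance check.

- The thresholds, the `Recording` type, and the function names are hypothetical.
- It deliberately ignores bearing and sensor-fusion checks, which would be needed to confirm that users actually saw the same thing.
- The pairwise loop is O(n²). Spatial indexing, such as the H3 cells described under Scalability, would narrow the candidates at scale.

```typescript
// Hypothetical recording summary.
interface Recording {
  id: string;
  lat: number;
  lon: number;
  startMs: number;
  endMs: number;
}

// Great-circle distance in km (haversine, mean Earth radius 6371 km).
function haversineKm(lat1: number, lon1: number, lat2: number, lon2: number): number {
  const rad = Math.PI / 180;
  const a =
    Math.sin(((lat2 - lat1) * rad) / 2) ** 2 +
    Math.cos(lat1 * rad) * Math.cos(lat2 * rad) * Math.sin(((lon2 - lon1) * rad) / 2) ** 2;
  return 2 * 6371 * Math.asin(Math.min(1, Math.sqrt(a)));
}

// Union-find root lookup with path halving.
function find(parent: number[], i: number): number {
  while (parent[i] !== i) {
    parent[i] = parent[parent[i]];
    i = parent[i];
  }
  return i;
}

// Groups recordings whose time ranges overlap (within slackMs) and whose
// locations are within maxKm, transitively. Returns groups of recording ids.
function groupRecordings(recs: Recording[], maxKm: number, slackMs: number): string[][] {
  const parent = recs.map((_, i) => i);
  for (let i = 0; i < recs.length; i++) {
    for (let j = i + 1; j < recs.length; j++) {
      const a = recs[i];
      const b = recs[j];
      const timeOverlap = a.startMs <= b.endMs + slackMs && b.startMs <= a.endMs + slackMs;
      if (timeOverlap && haversineKm(a.lat, a.lon, b.lat, b.lon) <= maxKm) {
        parent[find(parent, i)] = find(parent, j);
      }
    }
  }
  const groups = new Map<number, string[]>();
  recs.forEach((r, i) => {
    const root = find(parent, i);
    const group = groups.get(root);
    if (group) group.push(r.id);
    else groups.set(root, [r.id]);
  });
  return Array.from(groups.values());
}
```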

11. Notes/Ideas

- Desktop website feature parity (section 3.11) is planned as a post-mobile-launch milestone. The mobile app is the initial delivery target; web data visualization will follow.

- Growth features listed in section 3.11 (trajectory extrapolation, automated event grouping) are explicitly post-MVP. They should not gate the initial release.

- As UAP reporting standards evolve (e.g., through government or academic bodies), consider building integration hooks early so the platform can participate in emerging data exchange protocols without requiring a major rearchitecture.
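
For the trajectory extrapolation named among the post-MVP growth features, the simplest model is constant velocity. Velocity is estimated from two recent position fixes. The model then computes when, and how closely, the object would pass a given user: the closest point of approach.

- The sketch assumes positions already converted to a local east/north frame in meters.
- It ignores altitude, acceleration, and measurement error.
- `Fix2D` and `closestApproach` are hypothetical names.

```typescript
// Hypothetical position fix in a local frame.
interface Fix2D {
  tSec: number;  // timestamp in seconds
  east: number;  // meters, local frame (assumed)
  north: number;
}

interface Approach {
  secondsFromLastFix: number; // when the closest approach happens (0 if already receding)
  missDistanceM: number;      // predicted closest distance to the user
}

// Constant-velocity closest point of approach between a moving object
// (velocity from two fixes) and a stationary user position.
function closestApproach(prev: Fix2D, last: Fix2D, user: { east: number; north: number }): Approach {
  const dt = last.tSec - prev.tSec;
  if (dt <= 0) throw new Error("fixes must be in increasing time order");
  const vx = (last.east - prev.east) / dt;
  const vy = (last.north - prev.north) / dt;
  const rx = user.east - last.east; // user relative to the object's last position
  const ry = user.north - last.north;
  const speedSq = vx * vx + vy * vy;
  // Time minimizing |r - v t|, clamped at 0 so the result never lies in the past.
  const t = speedSq === 0 ? 0 : Math.max(0, (rx * vx + ry * vy) / speedSq);
  return {
    secondsFromLastFix: t,
    missDistanceM: Math.hypot(rx - vx * t, ry - vy * t),
  };
}
```

A notification rule might then fire when both the predicted miss distance and the time to approach fall below chosen thresholds.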
